The GPT Site Admin: A New Era of AI Integration with WordPress

We are already seeing various AI integrations with WordPress, mostly for generating content or as other narrowly scoped tools. There are also chatbots, but as a rule they are poorly integrated and operate outside the site's context.

One of the most significant advantages of Convoworks is its deep integration with the underlying system (WordPress), which opens up a broad range of possible uses. Combined with OpenAI's function calling support, this means we can now have an AI assistant that genuinely interacts with the system.

OpenAI Functions

OpenAI has introduced function calling for GPT models, which lets the bot send commands to your code in the form of function call responses. These structured responses tell your code exactly which action the system should execute and with which arguments. After execution, the results are sent back to GPT, which then formulates its reply based on what your code returned. This establishes a communication channel through which the AI can interact with your system in a remarkably reliable way.
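The round trip described above can be sketched as a small loop. This is a minimal illustration, not Convoworks code: the model is a scripted stand-in (fake_model), count_posts is a hypothetical function, and the message shapes are assumed to follow OpenAI's 2023 function-calling format, where the assistant returns a function_call with a name and JSON-encoded arguments, and the result goes back in a message with the role "function".

```python
import json
from typing import Any, Callable, Dict, List

# Hypothetical stand-in for a chat model: takes the message history and
# returns the next assistant message as a dict.
Model = Callable[[List[Dict[str, Any]]], Dict[str, Any]]


def run_with_functions(model: Model, functions: Dict[str, Callable[..., Any]],
                       messages: List[Dict[str, Any]], max_rounds: int = 5) -> str:
    """Keep executing requested functions until the model returns plain text."""
    for _ in range(max_rounds):
        reply = model(messages)
        messages.append(reply)
        call = reply.get("function_call")
        if call is None:
            return reply["content"]  # final answer for the user
        # The model names a function and passes its arguments as a JSON string.
        args = json.loads(call["arguments"])
        result = functions[call["name"]](**args)
        # Send the result back so the model can base its next reply on it.
        messages.append({"role": "function", "name": call["name"],
                         "content": json.dumps(result)})
    raise RuntimeError("no final answer within max_rounds")


def fake_model(messages: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Scripted model: asks for one function call, then answers with its result."""
    last = messages[-1]
    if last["role"] == "user":
        return {"role": "assistant", "content": None,
                "function_call": {"name": "count_posts",
                                  "arguments": '{"status": "publish"}'}}
    return {"role": "assistant",
            "content": f"You have {json.loads(last['content'])} published posts."}


history = [{"role": "user", "content": "How many posts are published?"}]
answer = run_with_functions(fake_model, {"count_posts": lambda status: 12}, history)
print(answer)  # You have 12 published posts.
```

In a real integration, fake_model would be replaced by an actual Chat Completions request that also sends the function definitions.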

For GPT to use these functions, we must define all exposed functions (in JSON Schema) and pass those definitions along with every API request. Each definition adds to the request size, so registering every function at once is not practical. Including only the definitions needed for a given task is feasible, and we plan to implement such a mechanism in the future, but it is not easy to build and will take some time.
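To make the size issue concrete, here is what one definition might look like, expressed as a Python dictionary. The get_recent_posts function and its parameters are hypothetical; the overall shape (name, description, JSON Schema parameters) is the one OpenAI's function calling expects. Serialized length is used only as a rough proxy for token count.

```python
import json
from typing import Any, Dict, List

# A hypothetical definition in the shape OpenAI's function calling expects:
# a name, a description and a JSON Schema object describing the parameters.
GET_RECENT_POSTS: Dict[str, Any] = {
    "name": "get_recent_posts",
    "description": "Return the most recent posts of the given type.",
    "parameters": {
        "type": "object",
        "properties": {
            "count": {"type": "integer", "minimum": 1,
                      "description": "How many posts to return"},
            "post_type": {"type": "string",
                          "description": "Post type, e.g. post or page"},
        },
        "required": ["count"],
    },
}


def request_overhead(definitions: List[Dict[str, Any]]) -> int:
    """Characters the definitions add to every API request (a rough proxy for tokens)."""
    return len(json.dumps(definitions))


# Every additional definition is serialized into every single request.
one = request_overhead([GET_RECENT_POSTS])
fifty = request_overhead([GET_RECENT_POSTS] * 50)
print(one, fifty)
```

The overhead grows linearly with the number of definitions, which is why exposing every WordPress function individually is not an option.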

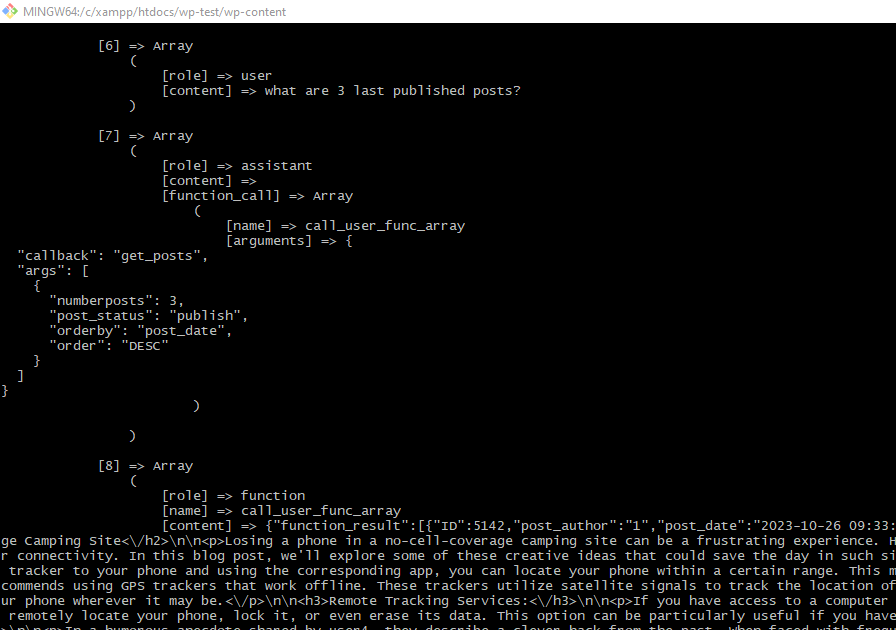

Operating within WordPress offers a simpler alternative. PHP includes the call_user_func() function (and its variant call_user_func_array(), which takes the arguments as an array), which can invoke any PHP function by name. Since GPT has extensive knowledge of PHP and WordPress functions, giving it only this one definition lets it use every available function. In other words, a comprehensive AI integration can be achieved with a single function definition.
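The principle can be illustrated with a rough Python analogue of call_user_func_array(): a single entry point that resolves a function from its name and calls it with a list of arguments. This only illustrates the idea; call_by_name is a hypothetical helper, not part of Convoworks.

```python
import importlib
from typing import Any, List


def call_by_name(qualified_name: str, args: List[Any]) -> Any:
    """Rough Python analogue of PHP's call_user_func_array(): resolve a function
    from its (optionally dotted) name and call it with positional arguments."""
    module_name, _, func_name = qualified_name.rpartition(".")
    module = importlib.import_module(module_name or "builtins")
    func = getattr(module, func_name)  # AttributeError if the name does not exist
    if not callable(func):
        raise TypeError(f"{qualified_name} is not callable")
    return func(*args)


# One entry point reaches any importable function:
print(call_by_name("math.sqrt", [16]))  # 4.0
print(call_by_name("len", ["hello"]))  # 5
print(call_by_name("os.path.basename", ["/var/www/wp-content/debug.log"]))  # debug.log
```

The property that makes this approach powerful also makes it risky: nothing limits which function can be called, which is a good reason to restrict such access to trusted users.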

GPT Site Admin

We've created a GPT-powered chatbot that has access to the call_user_func_array() function. Although it's not perfect and comes with some quirks, it exemplifies what we can expect in the future – the extraordinary ability to communicate directly with your website!

This tool lets you perform a wide range of tasks. For instance, you can check your latest posts, update them, change their taxonomy terms, and upload images from external URLs. It can also read and modify files, among other things, using PHP and WordPress functions to access or modify data as needed. Notably, it can call several functions in sequence before generating a final response.

Usage Examples

Here are a few examples demonstrating how you can use it. These instances are meant to give you a rough idea of how it operates. Please note that the videos are edited, and the waiting time for a response in reality is considerably longer. In some cases, multiple GPT API calls are made before a response is ready, and occasionally, the GPT API may be slow.

.htaccess Update

In this example, we demonstrate how it can access and modify files. We first ask it to check whether the .htaccess file exists, then to display its contents, and finally to modify it by adding an access restriction for the debug.log file.
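In PHP, the bot would typically perform these steps with functions such as file_exists(), file_get_contents() and file_put_contents(); we have not verified which ones it actually chooses. The sketch below mirrors the same three steps in Python. The rule uses Apache 2.4 syntax (Require all denied) and assumes the site runs on Apache 2.4 or later; the exact rule the bot writes may differ.

```python
from pathlib import Path

# Apache 2.4 rule that denies web access to debug.log (assumes Apache 2.4+).
DEBUG_LOG_RULE = (
    '<Files "debug.log">\n'
    "    Require all denied\n"
    "</Files>\n"
)


def restrict_debug_log(htaccess: Path) -> bool:
    """Check that .htaccess exists, read it, and append the rule if it is missing.
    Returns True when the file was modified."""
    if not htaccess.exists():  # step 1: does the file exist?
        raise FileNotFoundError(str(htaccess))
    contents = htaccess.read_text()  # step 2: read its current contents
    if '<Files "debug.log">' in contents:  # already restricted: nothing to do
        return False
    # Make sure the new block starts on its own line.
    separator = "" if not contents or contents.endswith("\n") else "\n"
    htaccess.write_text(contents + separator + DEBUG_LOG_RULE)  # step 3: modify it
    return True
```

Checking for an existing rule first keeps the change idempotent, so repeating the request does not append duplicate blocks.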

Hacker News API & New Blog Post

Hacker News has a simple API, which doesn’t require authentication and is available through straightforward GET requests, making it an excellent example. In most cases, GPT will use the file_get_contents() function to read data from the API, but we have also observed it using wp_remote_get() occasionally.

First, we ask the bot whether it is familiar with the Hacker News API. This question serves as a warm-up and provides context. We then instruct it to load the top three stories, fetch five comments from one of them (for example, the second story), write a blog post about that story, and insert the post into the database with appropriate tags. We limit the number of items to load (3, 5, etc.) because the Hacker News API returns each story and each comment through a separate request; loading more would require too many consecutive function calls and could exceed the model's maximum token limit.
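The following sketch shows why the request count grows so quickly. It uses the public Hacker News endpoints (topstories.json and item/<id>.json under https://hacker-news.firebaseio.com/v0); the helper names are ours, and the fetch function can be swapped for a stub so the sketch runs offline. With the limits from the prompt, it issues 1 + 3 + 5 = 9 requests, and in the chatbot each of them would typically be a separate function call whose result is added to the conversation.

```python
import json
from typing import Any, Callable, Dict
from urllib.request import urlopen

HN_API = "https://hacker-news.firebaseio.com/v0"


def fetch_json(url: str) -> Any:
    """One plain GET request; the Hacker News API needs no authentication."""
    with urlopen(url, timeout=10) as response:
        return json.load(response)


def story_with_comments(fetch: Callable[[str], Any] = fetch_json, top_n: int = 3,
                        story_index: int = 1, max_comments: int = 5) -> Dict[str, Any]:
    """Load the top stories, then a few comments from one of them.
    Every story and every comment is a separate request."""
    top_ids = fetch(f"{HN_API}/topstories.json")[:top_n]
    stories = [fetch(f"{HN_API}/item/{item_id}.json") for item_id in top_ids]
    story = stories[story_index]  # index 1 = the second story
    comment_ids = story.get("kids", [])[:max_comments]  # top-level comment IDs
    comments = [fetch(f"{HN_API}/item/{cid}.json") for cid in comment_ids]
    return {"titles": [s.get("title") for s in stories],
            "story": story, "comments": comments}


# result = story_with_comments()  # performs 1 + 3 + 5 = 9 live requests
```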

Other Ideas

You can ask whether the SOAP extension (or any other PHP extension) is installed, which operating system is in use, and which plugins are installed. You can also ask it to load specific posts, update their tags, or even attach images (from a URL) to them. Feel free to experiment. In some cases, you can give it hints about which functions to use or how to solve potential problems.

Installation and Setup

Follow these simple steps to enable and try it on your own WordPress installation.

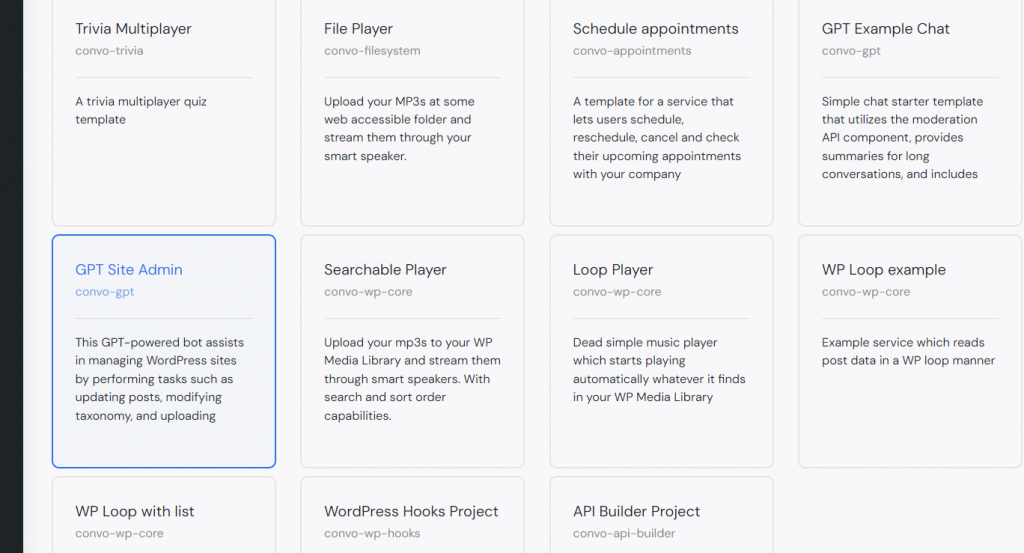

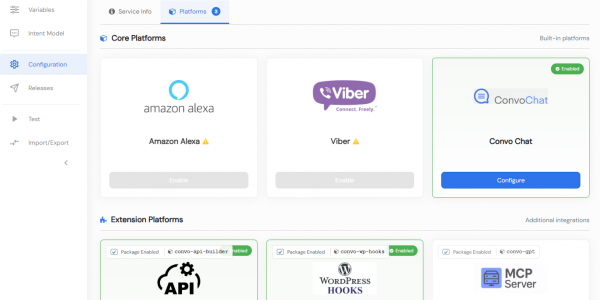

- Download and activate Convoworks WP (available through the plugin installer).

- Download and activate the Convoworks GPT package (available at https://github.com/zef-dev/convoworks-gpt/releases).

- In your WordPress admin, navigate to Convoworks WP and create a new service from the template GPT Site Admin.

- Set the GPT_API_KEY (OpenAI API key) in the service's Variables view and save.

- Navigate to the Test view in your newly created service and start using it!

Service Definition

This service builds on the example from our previous blog post, Harnessing the Power of GPT Functions in Convoworks. Here, we focus only on its most significant parts.

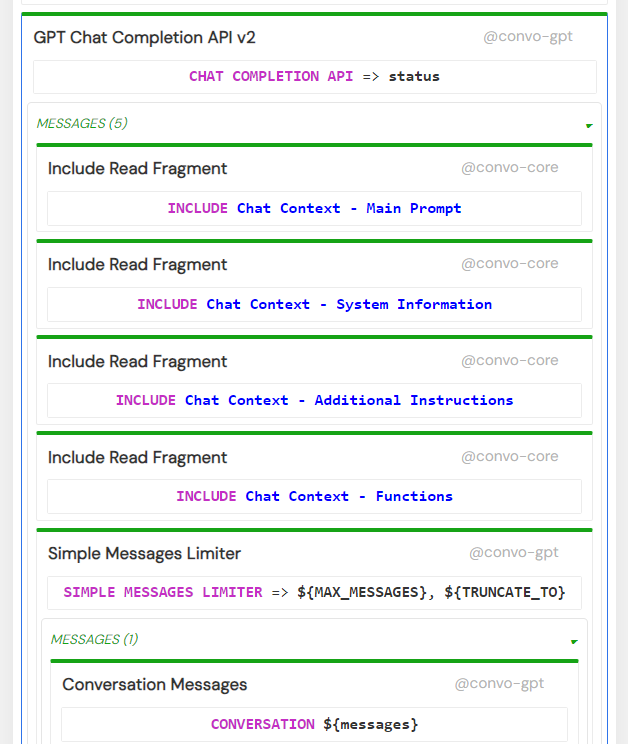

Prompt Structure

In our approach, we divided the system prompt (instructions for the bot) into several system messages. The primary one contains the main system prompt/instruction, while subsequent messages offer additional context such as current user info, current date/time, active themes, and active plugins. This separation allows us to easily toggle them on and off. For instance, in this example, system information is disclosed only to administrators.
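In Convoworks these messages are configured as elements in the service editor, not written as code. Purely to illustrate the structure, the sketch below assembles the same kind of message list in Python; build_system_messages and its parameters are hypothetical, and the administrator check mirrors the rule described above.

```python
from datetime import datetime, timezone
from typing import Dict, List, Optional

Message = Dict[str, str]


def build_system_messages(main_prompt: str, user_login: str, roles: List[str],
                          theme: str, plugins: List[str],
                          now: Optional[datetime] = None) -> List[Message]:
    """Assemble the system prompt as several separate messages so each
    piece of context can be switched on or off independently."""
    now = now or datetime.now(timezone.utc)
    messages = [
        {"role": "system", "content": main_prompt},
        {"role": "system", "content": f"Current user: {user_login} ({', '.join(roles)})"},
        {"role": "system", "content": f"Current date/time: {now.isoformat()}"},
    ]
    # System details are only disclosed to administrators.
    if "administrator" in roles:
        messages.append({"role": "system", "content": f"Active theme: {theme}"})
        messages.append({"role": "system",
                         "content": "Active plugins: " + ", ".join(plugins)})
    return messages


for m in build_system_messages("You are a helpful site admin.", "alice", ["administrator"],
                               "twentytwentythree", ["convoworks-wp", "convoworks-gpt"]):
    print(m["content"])
```

Keeping each piece of context in its own message means a condition (such as the role check) can drop it without touching the main prompt.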

Additionally, system messages can be used to describe specific tasks and how they should be executed. See our example for setting a post's featured image, and add your own if you wish.

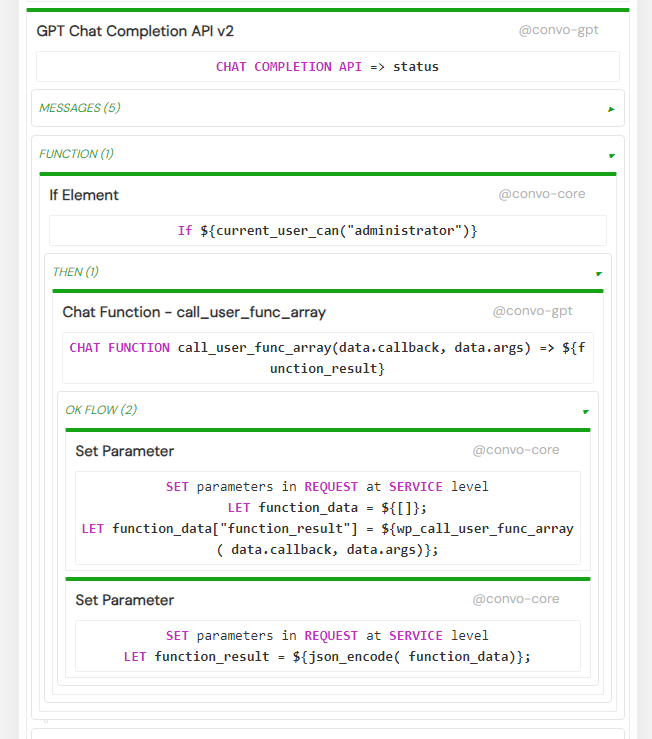

Defining Functions

In this service, we use a single function definition: "call_user_func_array". It is defined with the Chat Function Element, which describes the function and its arguments. When GPT calls the function, the element executes the components in its OK flow, which shows how the actual call is handled and how its result is produced. Notably, we invoke wp_call_user_func_array through expression language syntax, passing it the necessary arguments. This function mirrors call_user_func_array with one difference: if the requested function is not currently available, it attempts to include specific WordPress files first.
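The fallback behaviour of wp_call_user_func_array can be illustrated with a Python analogue: look the function up, and if it is missing, load additional code and try again. In WordPress the equivalent would be including a file such as wp-admin/includes/media.php, which defines functions like media_sideload_image() but is not loaded on every request. Which files Convoworks actually includes is not specified here; call_with_fallback and its parameters are hypothetical.

```python
import importlib
from typing import Any, Callable, Dict, List


def call_with_fallback(name: str, args: List[Any],
                       namespace: Dict[str, Callable[..., Any]],
                       fallback_modules: List[str]) -> Any:
    """Call namespace[name](*args). If the function is not available yet,
    load the fallback modules one by one and retry the lookup."""
    func = namespace.get(name)
    if func is None:
        for module_name in fallback_modules:
            module = importlib.import_module(module_name)  # like including an extra PHP file
            if callable(getattr(module, name, None)):
                func = getattr(module, name)
                namespace[name] = func  # now available for later calls too
                break
    if func is None:
        raise NameError(f"function {name!r} is not available")
    return func(*args)


functions = {"len": len}
print(call_with_fallback("len", ["abc"], functions, []))  # 3
print(call_with_fallback("sqrt", [9], functions, ["math"]))  # 3.0
```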

For a more comprehensive list of other functions usable in Convoworks, refer to the documentation for the core and wp core packages.

Note that in this service, function calls are enabled only for administrators.

Other Configuration Options

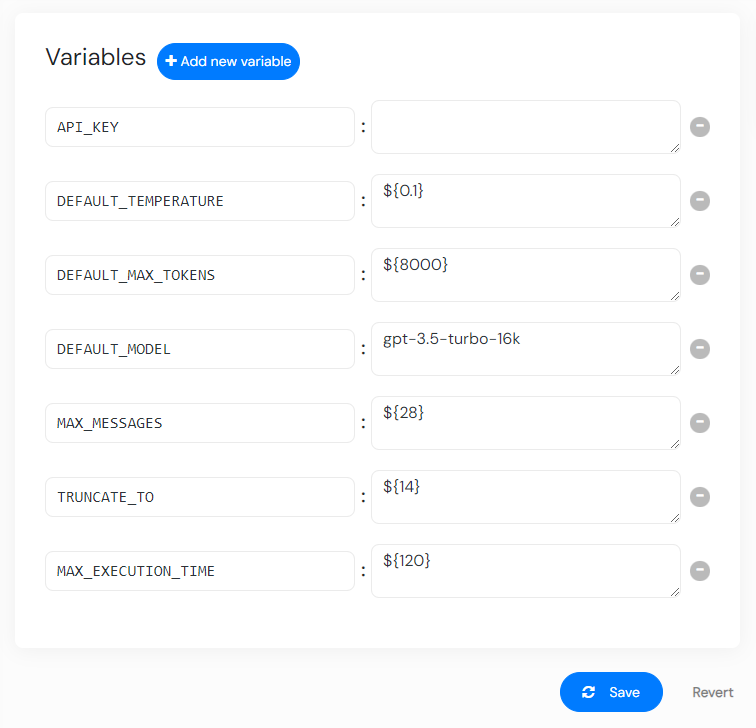

There are several more global variables you can adjust in the Variables view.

- DEFAULT_MAX_TOKENS, DEFAULT_MODEL: The gpt-3.5-turbo-16k model supports up to 16k tokens, while the gpt-4 model is limited to 8k tokens. The default values are tailored to the gpt-3.5-turbo-16k model. If you plan to use gpt-4, we recommend halving the values of DEFAULT_MAX_TOKENS, MAX_MESSAGES, and TRUNCATE_TO.

- MAX_MESSAGES, TRUNCATE_TO: These parameters limit how many messages a conversation can hold. When the threshold is reached, the conversation is truncated, and a summary of the truncated content is provided in a separate additional message. These variables work in conjunction with the Messages Limiter Element.

- MAX_EXECUTION_TIME: The maximum PHP execution time, which lets longer operations complete.
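A message limiter of this kind can be sketched as follows. This is our reading of the two variables, not the Messages Limiter Element's actual code: we assume TRUNCATE_TO is the number of recent messages kept after truncation, and the summarize callback stands in for whatever produces the summary (in practice, likely another model call). limit_messages is a hypothetical helper.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def limit_messages(messages: List[Message], max_messages: int, truncate_to: int,
                   summarize: Callable[[List[Message]], str]) -> List[Message]:
    """Once the history reaches max_messages, keep only the newest truncate_to
    messages and put a summary of the dropped ones in front of them."""
    if not 0 < truncate_to < max_messages:
        raise ValueError("truncate_to must be between 1 and max_messages - 1")
    if len(messages) < max_messages:
        return messages  # below the threshold: nothing to do
    dropped, kept = messages[:-truncate_to], messages[-truncate_to:]
    summary = {"role": "system",
               "content": "Summary of the earlier conversation: " + summarize(dropped)}
    return [summary] + kept


history = [{"role": "user", "content": f"message {i}"} for i in range(12)]
trimmed = limit_messages(history, max_messages=10, truncate_to=4,
                         summarize=lambda dropped: f"{len(dropped)} earlier messages")
print(len(trimmed))  # 5
```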

Operational Considerations

GPT may occasionally try to call non-existent functions, or make up values (such as a random ID) when the real one is missing. It may also try to load an excessive amount of data, which can exceed the model's maximum token limit. It can also repeatedly execute a function that keeps failing and get stuck. To handle this, we have implemented a detection mechanism: the system halts execution after three unsuccessful attempts.
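A guard like the one described can be sketched as a counter around each function call. Whether Convoworks counts consecutive or total failures is not stated, so this sketch assumes consecutive failures and resets the counter on success; FailureGuard and TooManyFailures are hypothetical names. Errors below the limit are returned as data so the model can try a different approach.

```python
from typing import Any, Callable


class TooManyFailures(RuntimeError):
    """Raised to halt execution once the failure limit is reached."""


class FailureGuard:
    """Wraps function calls and stops after max_failures consecutive failures."""

    def __init__(self, max_failures: int = 3) -> None:
        self.max_failures = max_failures
        self.failures = 0

    def call(self, func: Callable[..., Any], *args: Any) -> Any:
        try:
            result = func(*args)
        except Exception as exc:
            self.failures += 1
            if self.failures >= self.max_failures:
                raise TooManyFailures(
                    f"halting after {self.failures} failed calls") from exc
            # Below the limit: report the error back so the model can adjust.
            return {"error": str(exc)}
        self.failures = 0  # a success resets the counter
        return result
```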

GPT invokes functions by generating JSON arguments that follow the function definition. However, this JSON is sometimes malformed, which causes the operation to fail.
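One common way to cope with malformed arguments, sketched here under our own assumptions rather than as Convoworks' behaviour, is to catch the parse error and hand a readable message back to the model as the function result, giving it a chance to retry with valid JSON. parse_arguments is a hypothetical helper.

```python
import json
from typing import Any, Dict, Tuple


def parse_arguments(raw: str) -> Tuple[Dict[str, Any], str]:
    """Parse the model's JSON arguments. Returns (arguments, error);
    error is an empty string on success."""
    try:
        args = json.loads(raw)
    except json.JSONDecodeError as exc:
        # Describe the problem so it can be sent back to the model.
        return {}, f"Invalid JSON in function arguments: {exc.msg} at position {exc.pos}"
    if not isinstance(args, dict):
        return {}, "Function arguments must be a JSON object"
    return args, ""


print(parse_arguments('{"callback": "get_posts", "args": []}'))
print(parse_arguments('{"callback": "get_posts", "args": [],}'))  # trailing comma
```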

For a more detailed view of what it is doing, check debug.log. For more information on debugging, see Convoworks Debugging Log Files.

Since language models are known to be inconsistent, we do not recommend running update or delete commands on a production server. Spend some time understanding how it operates in a development environment before using such commands on a live site.

Conclusion

This example primarily showcases the capabilities and potential of such integrated systems. While GPT uses call_user_func() effectively, supplying it with actual, predefined functions still tends to give more reliable and accurate results. Looking ahead, we expect dynamic context and task definitions, along with their corresponding functions, to evolve. Imagine a plugin that registers its own task instruction definitions, so that its features can be used seamlessly through a chat interface.
